How to reduce latency in MainStage

Use advanced settings in MainStage to minimize the amount of latency (delay) you experience when playing software instruments.

When playing a software instrument in MainStage, you might experience a slight delay between when you play a note and when you hear the sound from your speakers or headphones. This delay is called latency and is caused by buffering within your Mac.

Here’s how to quickly optimize your latency settings in MainStage:

In MainStage, load the patch or concert in which you’re experiencing the most latency.

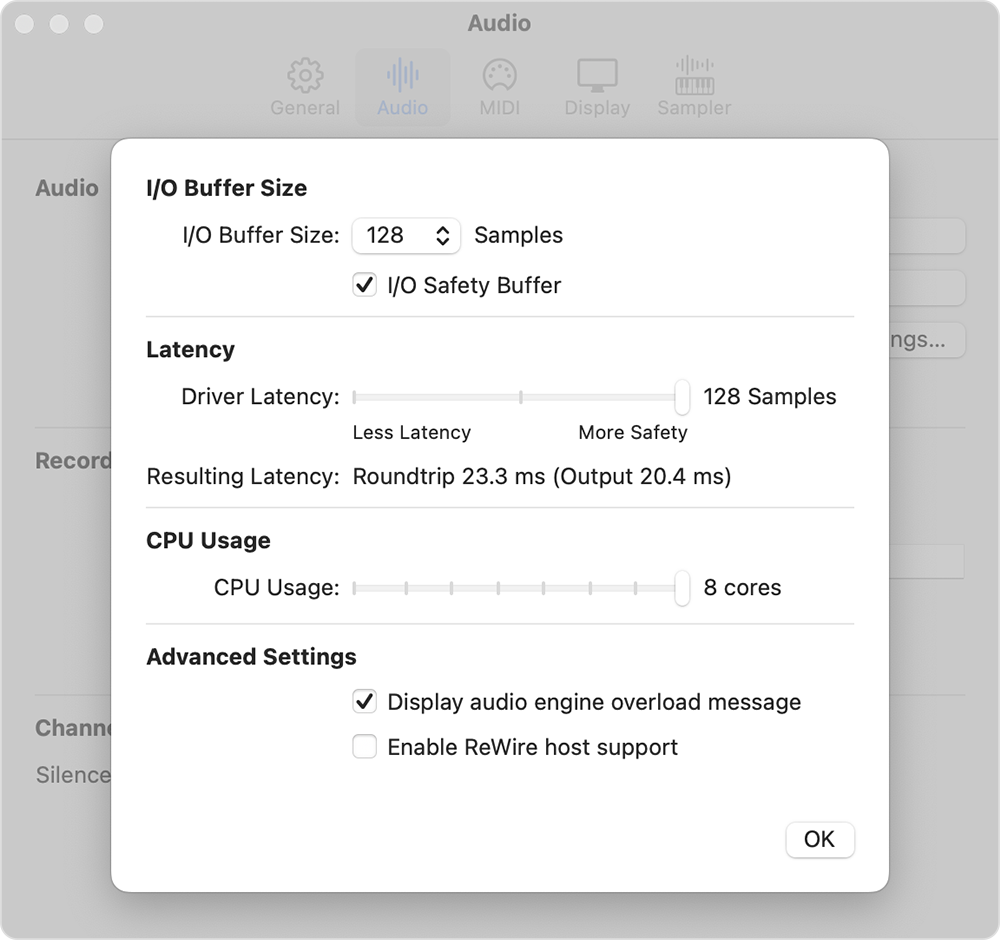

Choose MainStage > Preferences, choose Audio, then click Advanced Settings.

Click the I/O Buffer Size pop-up menu, then choose the lowest number available.

Play some notes. If you hear unwanted audio artifacts like dropouts, pops, or glitches, choose the next higher setting, and repeat until you no longer hear any artifacts.

If the lowest I/O Buffer Size setting you can use without audio artifacts still has too much latency for you to perform comfortably, choose the next lower I/O Buffer Size setting, then turn on the I/O Safety Buffer.

Once you have determined the best buffer settings, try lowering the Driver Latency slider to further reduce the overall latency of your system.
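The steps above amount to a simple search: start at the smallest buffer size and move up until playback is clean. The following is a minimal sketch of that logic in Python, not anything MainStage exposes. The function name, the list of sizes, and the `has_artifacts` check are all hypothetical; in practice the check is you listening while you play.

```python
from typing import Callable, Optional, Sequence


def lowest_clean_buffer_size(
    sizes: Sequence[int],
    has_artifacts: Callable[[int], bool],
) -> Optional[int]:
    """Return the smallest buffer size that plays cleanly, or None if none does."""
    for size in sorted(sizes):
        # Stop at the first (smallest) size that produces no artifacts.
        if not has_artifacts(size):
            return size
    return None


# Assumed menu values between 16 and 1024 samples; the options you actually
# see may differ depending on your audio device.
sizes = [16, 32, 64, 128, 256, 512, 1024]

# Stand-in for a listening test: pretend every size below 128 glitches.
chosen = lowest_clean_buffer_size(sizes, lambda size: size < 128)
print(chosen)
```

If the size this search settles on still feels too slow to play, that is the point at which the I/O Safety Buffer and the Driver Latency slider become worth trying.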

Advanced audio settings in MainStage

This section provides more detail on the advanced preference settings in MainStage that affect latency.

I/O Buffer Size

For audio channel strips, the I/O Buffer Size setting determines both the input and output buffer sizes. For software instrument channel strips, it determines only the output buffer size, because these channel strips have no audio input. The buffer size ranges from 16 to 1024 samples.

When you change the I/O Buffer Size setting, you can see how it affects the total amount of input and output latency, which is shown as Roundtrip in milliseconds under the Latency heading. The output latency is also shown in parentheses. With software instruments, the output latency is the number that matters.
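To get a feel for how a buffer size in samples relates to a delay in milliseconds, the sketch below converts one buffer's worth of samples to time. It assumes a sample rate of 44.1 kHz, which this article does not specify, and the function name is hypothetical. The values MainStage displays cover more than a single buffer, so expect them to be higher than these figures.

```python
def buffer_latency_ms(buffer_size_samples: int, sample_rate_hz: float = 44100.0) -> float:
    """Time taken to fill or play one buffer, in milliseconds."""
    # samples / (samples per second) = seconds; multiply by 1000 for ms.
    return buffer_size_samples / sample_rate_hz * 1000.0


# Assumed 44.1 kHz sample rate; pass a different rate if your project uses one.
for size in (16, 128, 1024):
    print(f"{size:>4} samples -> {buffer_latency_ms(size):.2f} ms")
```

Halving the buffer size halves this figure, which is why the lowest setting that plays cleanly is the usual target.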

Lower I/O Buffer Size settings result in less latency, but might introduce audio artifacts, especially if you use a lot of plug-ins and channel strips simultaneously. If you want less latency but are experiencing audio artifacts, try reducing the number of plug-ins and channel strips you’re using at the same time.

I/O Safety Buffer

When you turn on the I/O Safety Buffer, MainStage adds an extra output buffer to protect against overloads due to unexpected CPU spikes. This buffer is the same size as the I/O Buffer Size setting, but it affects only the output buffer.

For example, if you find there is too much latency with an I/O Buffer Size of 256 samples, but you hear unwanted audio artifacts with an I/O Buffer Size of 128 samples, set the I/O Buffer Size to 128 and turn on the I/O Safety Buffer. This will yield more latency than 128 samples without the Safety Buffer, but less than 256 samples without it.
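The comparison in this example can be checked with some rough arithmetic. The sketch below is a deliberately simplified model, not MainStage's actual latency calculation: it assumes roundtrip buffering on an audio channel strip is one input buffer plus one output buffer, plus one more buffer of the same size when the I/O Safety Buffer is on, and it ignores driver and hardware latency. The function name is hypothetical.

```python
def modeled_roundtrip_samples(io_buffer_size: int, safety_buffer: bool = False) -> int:
    """Simplified roundtrip buffering in samples (assumed model, see lead-in)."""
    input_buffer = io_buffer_size
    output_buffer = io_buffer_size
    # The safety buffer matches the I/O buffer size and applies to output only.
    extra_output = io_buffer_size if safety_buffer else 0
    return input_buffer + output_buffer + extra_output


low = modeled_roundtrip_samples(128)                       # 128 without the Safety Buffer
middle = modeled_roundtrip_samples(128, safety_buffer=True)  # 128 with the Safety Buffer
high = modeled_roundtrip_samples(256)                      # 256 without the Safety Buffer
print(low, middle, high)
```

Under these assumptions, 128 samples with the Safety Buffer lands between the two plain settings, which matches the ordering described above.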

Driver Latency slider

The Driver Latency slider affects the latency of the Core Audio driver, which passes the signal to your audio output, such as the headphone output on your Mac or an external audio interface. By default, the slider is set to the maximum possible value, which is equal to the current I/O Buffer Size. When you move the slider to the left to reduce driver latency, the overall roundtrip latency is also reduced.

As with the I/O buffer size, lower Driver Latency settings can lead to unwanted audio artifacts. The minimum setting possible for a particular system is primarily determined by the audio driver. The Driver Latency setting has no effect on the number of plug-ins or channel strips you can run.
